Unlock Performance: A Step-by-Step Guide to Integrating Ollama Models

Are you tired of complex setups just to get large language models (LLMs) running locally? Ollama makes local LLMs more accessible by offering a streamlined way to download and run open models on your own machine. This guide walks you through integrating Ollama models into your workflow, from installation to running models and tuning your deployment. Whether you’re a developer, a data scientist, or simply curious about AI, you’ll learn how to use these models efficiently.

What is Ollama and Why Use It?

Ollama is an open-source desktop application and command-line tool designed to make running LLMs locally easier. It is an independent project rather than a Hugging Face product, although it can run many GGUF-format models hosted on the Hugging Face Hub. Ollama handles downloading, caching, and executing models such as Llama 2, Mistral, and Gemma directly on your local machine. For many use cases this removes the need for cloud-based APIs or complex server setups, which can reduce costs and avoid network round trips. Its focus on ease of use makes it a good choice for both individual users and developers.

Key Benefits of Using Ollama

- Local Execution: Run LLMs without internet access once a model has been downloaded.

- Simplified Download: Download models with a single command.

- Easy Management: List, download, and remove models with simple commands such as `ollama list`, `ollama pull`, and `ollama rm`.

- Fast Performance: Leverage your local hardware for quicker response times.

- Cost-Effective: Eliminate API costs associated with cloud-based LLMs.

Consider this: if a cloud-based API charges, say, $0.01 per 1,000 tokens, a workload of 10 million tokens costs about $100. Running models locally with Ollama removes these per-token fees, although you still pay for hardware and electricity, so the savings are largest for sustained, token-heavy tasks. Local inference also avoids network round trips; actual response times depend on your hardware, and a capable GPU helps considerably.
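To make the cost comparison concrete, here is a minimal sketch of the arithmetic. The $0.01 per 1,000 tokens figure is only the illustrative price used above, and the function name and workload sizes are hypothetical; substitute your provider's real pricing.

```python
def api_cost_usd(tokens: int, price_per_1k_tokens: float = 0.01) -> float:
    """Estimate API cost at a flat price per 1,000 tokens (illustrative price only)."""
    return tokens / 1000 * price_per_1k_tokens


# Hypothetical workloads, from a small experiment to heavy sustained usage.
for tokens in (100_000, 10_000_000, 1_000_000_000):
    print(f"{tokens:>13,} tokens -> ${api_cost_usd(tokens):,.2f}")
```

The estimate ignores the hardware and electricity you pay for when running locally, so treat it as one side of the comparison rather than a full cost model.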

Installation and Setup: Getting Started with Ollama

Getting Ollama up and running is straightforward. The installation process varies depending on your operating system. Head over to the Ollama Download Page for detailed instructions specific to your OS – macOS, Linux, or Windows.

Installation Instructions

- macOS: Download the .dmg file and follow the on-screen instructions.

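- Linux: Run the official install script listed on the Ollama Download Page (`curl -fsSL https://ollama.com/install.sh | sh`). Some distributions also package Ollama, but availability and versions vary.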

- Windows: Download the installer and run it.

Once installed, open the Ollama application, or open a terminal and run `ollama --version` to check the installation. You should see the Ollama version printed.
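If you want to perform the same check from a script, the following sketch wraps the version command. It assumes only that the `ollama` executable is on your `PATH`; the helper name `ollama_version` is made up for this example.

```python
import shutil
import subprocess
from typing import Optional


def ollama_version() -> Optional[str]:
    """Return the text printed by `ollama --version`, or None if the CLI is unavailable."""
    if shutil.which("ollama") is None:
        return None  # the executable is not on PATH
    try:
        result = subprocess.run(
            ["ollama", "--version"], capture_output=True, text=True, timeout=10
        )
    except (OSError, subprocess.TimeoutExpired):
        return None
    # Some tools print version info to stderr, so fall back to it if stdout is empty.
    return (result.stdout or result.stderr).strip()


print(ollama_version() or "ollama not found on PATH")
```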

Running Models with Ollama: A Practical Guide

Now that Ollama is installed, let’s explore how to run models. The command-line interface makes it simple to interact with your AI models. To start, type `ollama run <model-name>` and press Enter. For example, to run the Llama 2 7B model, you would type `ollama run llama2`. Ollama will automatically download the model if it’s not already cached.
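Besides the interactive command line, Ollama exposes a local HTTP API, which is handy when you want to call a model from your own code. The sketch below uses only the standard library and assumes the server is listening on its default address, `http://localhost:11434`, and that the model has already been pulled. The helper names are hypothetical.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434"  # assumed default address; adjust if yours differs


def build_generate_request(model: str, prompt: str) -> urllib.request.Request:
    """Build a non-streaming POST request for the /api/generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        f"{OLLAMA_URL}/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str) -> str:
    """Send the prompt to a running Ollama server and return the generated text."""
    request = build_generate_request(model, prompt)
    with urllib.request.urlopen(request, timeout=300) as resp:
        return json.loads(resp.read())["response"]


# Usage (needs a running Ollama server and a pulled model):
#   print(generate("llama2", "Explain what a tokenizer does in one sentence."))
```

Setting `"stream": False` asks for a single JSON reply, which keeps the example short; streaming is usually nicer for interactive use.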

Understanding Model Names

Ollama identifies models by a name and an optional tag in the form `name:tag`, for example `llama2:13b`; if you omit the tag, `latest` is used. Popular choices include `llama2`, `mistral`, and `gemma`. You can find a comprehensive list of supported models on the Ollama Models Page.
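Model references generally take the form `name:tag`, with `latest` used when no tag is given. As a small illustration, the sketch below splits a reference into its two parts. The function name is hypothetical, and the parsing is a simplification rather than Ollama's own logic.

```python
def split_model_name(ref: str) -> tuple[str, str]:
    """Split 'name:tag' into (name, tag); a missing tag defaults to 'latest'."""
    name, sep, tag = ref.rpartition(":")
    # No colon at all, or the last colon sits inside a host:port prefix
    # (the part after it still contains a slash): treat the tag as missing.
    if not sep or "/" in tag:
        return ref, "latest"
    return name, tag


for ref in ("llama2", "llama2:13b", "mistral:latest"):
    print(ref, "->", split_model_name(ref))
```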

Running a model opens an interactive session: you type a prompt at the `>>>` prompt and the model’s response is printed below it. Type `/bye` to exit. You can refine your prompts for more specific results, so experiment with different inputs to see how the models respond.

Optimizing Performance: Maximizing Your Local LLM Experience

While Ollama performs well out of the box, you can further optimize your local LLM experience. This involves leveraging your hardware, managing memory, and choosing the right model for your needs. The `ollama ps` command shows which models are currently loaded and whether they are running on the CPU or the GPU.

GPU Utilization

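If you have a supported GPU (for example, a compatible NVIDIA card), Ollama detects it and uses it for accelerated inference automatically; `ollama run` does not take a `--gpu` flag. To confirm that a model is on the GPU, run `ollama ps` while it is loaded and check the processor column. On NVIDIA systems you can restrict which GPUs Ollama sees by setting the `CUDA_VISIBLE_DEVICES` environment variable before starting the Ollama server.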
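One way to check from code whether a loaded model actually sits in GPU memory is to look at the list of running models that the local API returns (the `/api/ps` endpoint). The sketch below works on an already-fetched entry and assumes it carries `size` and `size_vram` byte counts. Treat those field names, the helper name, and the sample numbers as assumptions to verify against your Ollama version.

```python
def gpu_fraction(model_entry: dict) -> float:
    """Return the share of a loaded model that resides in GPU memory (0.0 to 1.0)."""
    size = model_entry.get("size", 0)
    if size <= 0:
        return 0.0
    return min(model_entry.get("size_vram", 0) / size, 1.0)


# Hypothetical entry shaped like one item of the running-models list.
sample = {"name": "llama2:latest", "size": 4_000_000_000, "size_vram": 3_000_000_000}
print(f"{sample['name']}: {gpu_fraction(sample):.0%} of the model is in GPU memory")
```

A value well below 100% suggests that part of the model was placed in system RAM, which usually slows generation.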

Memory Management

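LLMs can be memory-intensive. `ollama run` does not take a `--memory` flag; memory use is determined mainly by the model’s size, its quantization, and the context length. To reduce memory pressure, choose a smaller or more heavily quantized model (for example, a 7B model instead of a 13B one), or lower the context window with the `num_ctx` parameter, e.g. `/set parameter num_ctx 2048` inside an interactive session.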
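A rough rule of thumb helps when judging whether a model will fit in memory: the weights alone need about (number of parameters × bits per weight ÷ 8) bytes. The sketch below applies that formula. It is only an estimate, since it ignores the context cache and runtime overhead, and the function name is hypothetical.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights only, in gigabytes (1 GB = 1e9 bytes)."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


# Compare common model sizes at 16-bit and 4-bit precision.
for params in (7, 13, 34):
    for bits in (16, 4):
        print(f"{params}B at {bits}-bit: ~{weight_memory_gb(params, bits):.1f} GB")
```

Real usage will be higher than these figures, so leave headroom beyond the estimate.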

Table: Key Performance Metrics

| Metric | Description | Impact |
|---|---|---|
| Model Size | Number of parameters in the model (e.g., 7B, 13B, 34B). | Larger models generally produce better output but need more memory and respond more slowly. |
| GPU Utilization | Percentage of GPU processing used. | High utilization during generation suggests inference is running on the GPU rather than falling back to the CPU. |
| CPU Utilization | Percentage of CPU processing used. | Sustained high CPU utilization can indicate a bottleneck, for example a model running partly or wholly on the CPU. |

Troubleshooting and Common Issues

Like any software, Ollama can sometimes encounter issues. Here are a few common problems and their solutions.

Model Not Found

If you get an error stating that the model is not found, double-check the model name. Ensure it’s spelled correctly and that it’s available on the Ollama Models Page.
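Before running a model, you can also check whether it is already present locally. The local API's `/api/tags` endpoint lists downloaded models; the sketch below inspects such a response. The response shape shown (a `models` list whose items have a `name`) and the helper names are assumptions for illustration, and the sample data is made up.

```python
def installed_names(tags_response: dict) -> set[str]:
    """Collect the 'name:tag' strings from a list-models response."""
    return {m["name"] for m in tags_response.get("models", [])}


def is_installed(ref: str, tags_response: dict) -> bool:
    """Check whether a model reference is present locally; a bare name means ':latest'."""
    wanted = ref if ":" in ref else f"{ref}:latest"
    return wanted in installed_names(tags_response)


# Hypothetical response with two locally available models.
sample_tags = {"models": [{"name": "llama2:latest"}, {"name": "mistral:7b"}]}
print(is_installed("llama2", sample_tags))   # True
print(is_installed("gemma", sample_tags))    # False
```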

Slow Response Times

Slow response times are often caused by insufficient RAM or GPU memory, a slow CPU, or a model running on the CPU instead of the GPU. Try a smaller or more heavily quantized model, reduce the context length, or close other applications that are consuming resources. Also, ensure your GPU driver is up to date if you are using GPU acceleration.
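To tell whether a change actually helps, it is useful to measure generation speed rather than rely on impressions. Responses from the local generate endpoint include timing fields; the sketch below derives tokens per second from `eval_count` (generated tokens) and `eval_duration` (nanoseconds). The helper name and sample values are hypothetical, and you should confirm the field names against the API documentation for your version.

```python
def tokens_per_second(response: dict) -> float:
    """Compute generation speed from a non-streaming generate response."""
    duration_ns = response.get("eval_duration", 0)
    if duration_ns <= 0:
        return 0.0
    seconds = duration_ns / 1e9  # the duration is reported in nanoseconds
    return response.get("eval_count", 0) / seconds


# Hypothetical timing data: 120 tokens generated in 4 seconds.
sample_response = {"eval_count": 120, "eval_duration": 4_000_000_000}
print(f"{tokens_per_second(sample_response):.1f} tokens/s")
```

Comparing this number before and after switching models or settings gives a simple, repeatable benchmark.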

If you’re still experiencing issues, consult the Ollama Troubleshooting Guide for more detailed information and potential solutions.

Conclusion: Embracing the Future of AI Accessibility with Ollama

Ollama provides a convenient and powerful way to integrate LLMs into your workflow. Its ease of use, local execution, and streamlined model management make it a compelling option for developers, researchers, and anyone interested in exploring the potential of AI. By adopting Ollama, you can run capable open language models without the complexity and per-token cost of cloud-based solutions. Start using Ollama today!

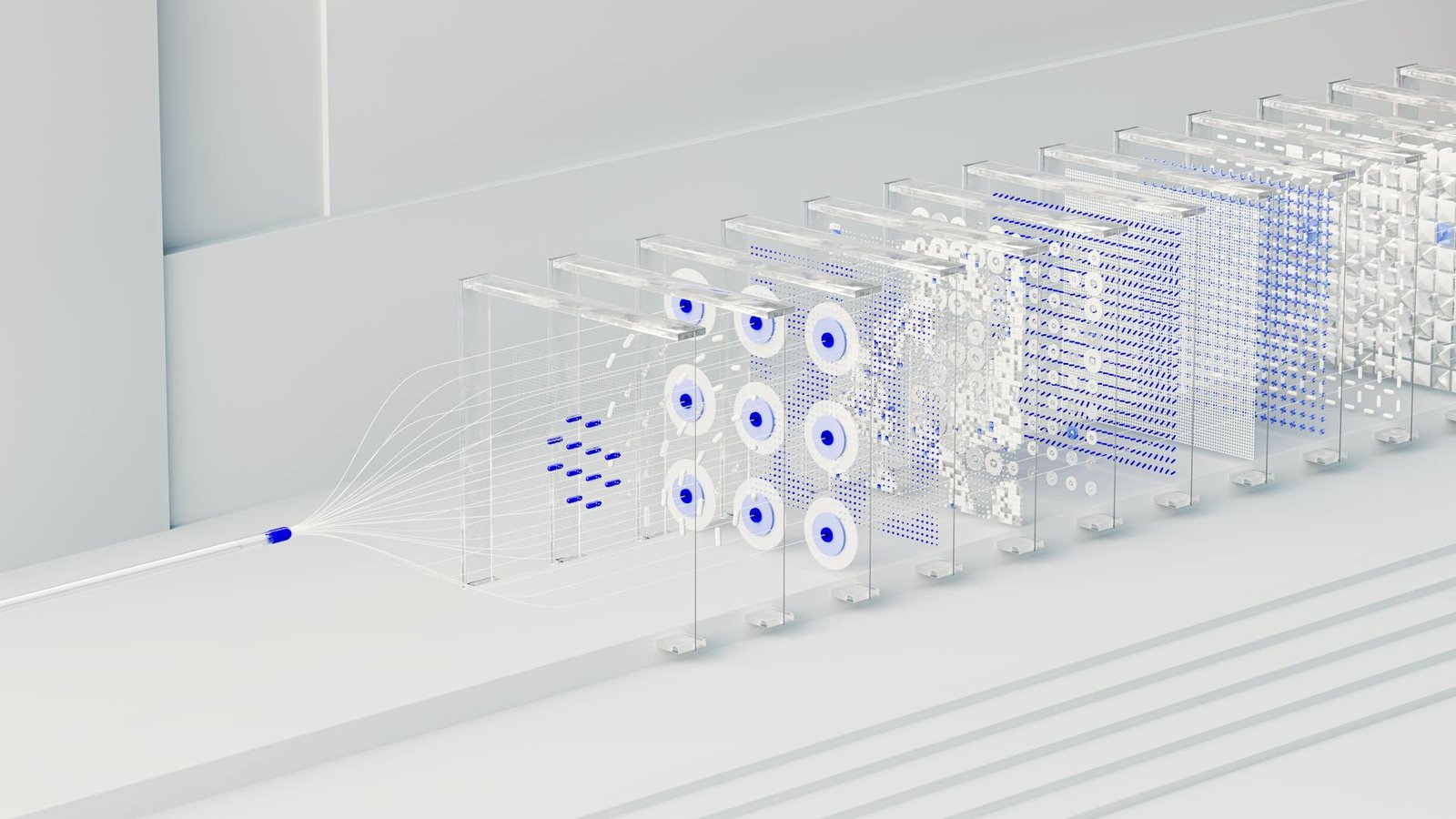

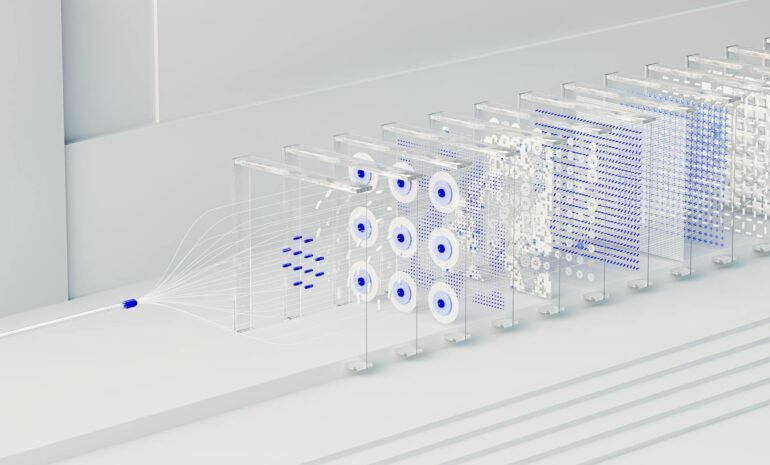

Image by: Google DeepMind