Supercharge Your Machine Learning Pipeline with Ollama and Hugging Face Models

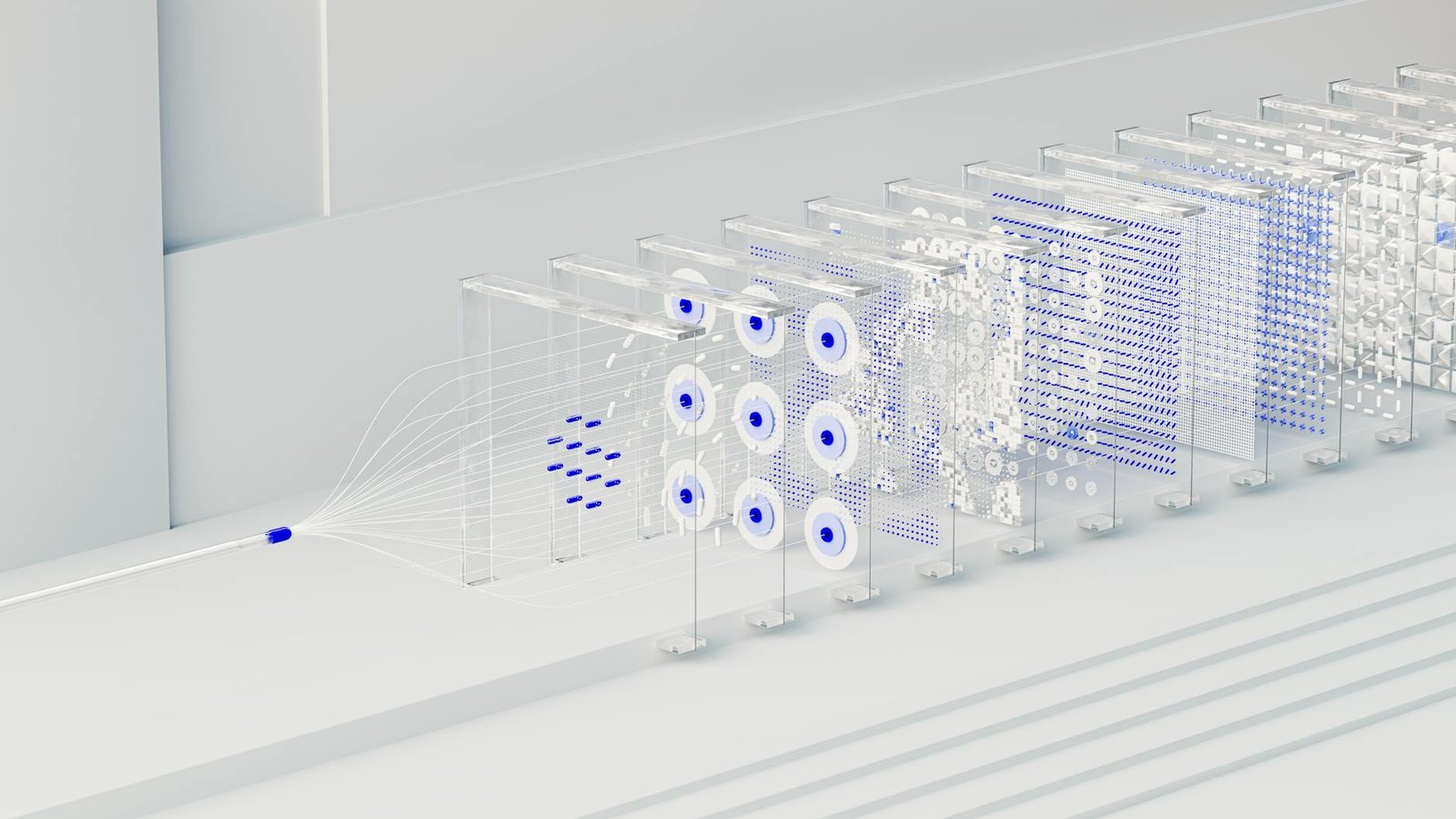

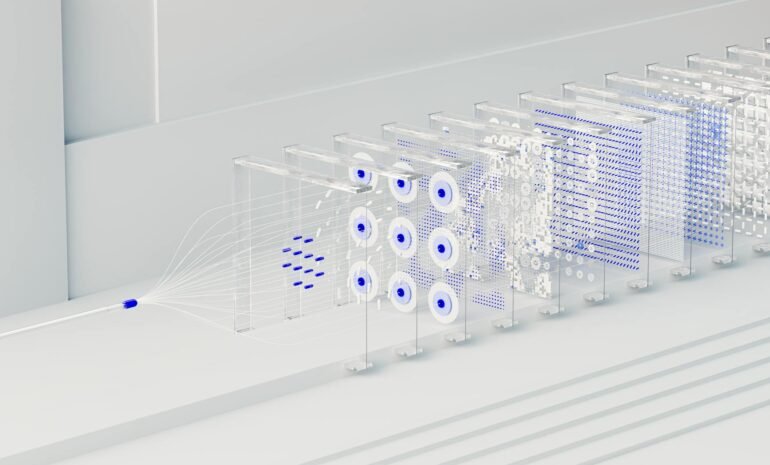

Are you ready to unlock the power of large language models (LLMs) without hefty infrastructure costs and a complex setup? The hype around LLMs is real, powering everything from content generation to code completion, but deploying and managing these models can be a significant challenge. Enter Ollama, an open-source tool that makes running LLMs on your own machine remarkably easy. This tutorial shows you how to use Ollama to speed up your machine learning workflows: you will learn how to download and run open-weight LLMs locally and integrate them into your Python projects. The payoff is faster experimentation, no per-request API fees, and a more accessible LLM ecosystem.

What Is Ollama and Why Use It?

Ollama is an open-source tool that lets you run large language models (LLMs) locally on your own machine. It is a separate project from Hugging Face, although it can run many models published on the Hugging Face Hub. Designed for developers and researchers, it provides a simple way to use capable models without cloud infrastructure: smaller and quantized models can run on an ordinary CPU, while a GPU speeds up larger ones. Unlike traditional setups that require configuring complex environments and managing dependencies, Ollama handles model downloads and the inference runtime behind a single command-line tool, which makes it accessible even to those without extensive machine learning experience. The core benefit is cost: instead of paying per request for a hosted API, you run models on hardware you already have. Ollama offers a curated model library and can also run GGUF-format models from the Hugging Face Hub, covering tasks from text generation and summarization to code generation and question answering. This significantly lowers the barrier to entry for using cutting-edge LLMs.

Benefits of Using Ollama for Machine Learning

Ollama provides several key advantages for integrating LLMs into machine learning pipelines:

- Simplified Deployment: Ollama handles the complexities of model download and execution, allowing you to focus on your machine learning tasks.

- Cost-Effective: Many models, especially smaller or quantized ones, run on consumer hardware, so you avoid paying for cloud GPUs or per-request API fees.

- Easy Integration: Ollama exposes a local REST API and has official Python and JavaScript client libraries, so it fits into most programming environments.

- Large Model Library: Access a wide range of open-weight LLMs from the Ollama library, plus GGUF models hosted on the Hugging Face Hub.

- Local Execution: Maintain data privacy by running models locally.

For example, a data scientist working on sentiment analysis can quickly compare several LLMs without relying on a cloud platform, shortening the path to a first experiment and avoiding usage-based costs.
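A comparison like this can be sketched as a small evaluation loop. The sketch below assumes a hypothetical `generate_fn(model, prompt)` callable that returns the model's text, so the scoring logic can be tested without a running server; the prompt wording, the label set, and the helper names are illustrative choices, not part of Ollama.

```python
from typing import Callable, Dict, List, Tuple

# Illustrative label set; adjust to your task.
LABELS = ("positive", "negative", "neutral")


def build_sentiment_prompt(text: str) -> str:
    """Build a prompt asking the model for a one-word sentiment label."""
    return (
        "Classify the sentiment of the following text as positive, negative, "
        "or neutral. Answer with one word.\n\n"
        f"Text: {text}\nSentiment:"
    )


def parse_label(raw: str) -> str:
    """Return the label that appears earliest in the model output, or 'unknown'."""
    lowered = raw.lower()
    hits = [(lowered.find(label), label) for label in LABELS if label in lowered]
    return min(hits)[1] if hits else "unknown"


def accuracy_by_model(
    models: List[str],
    examples: List[Tuple[str, str]],  # (text, expected label) pairs
    generate_fn: Callable[[str, str], str],  # hypothetical: (model, prompt) -> text
) -> Dict[str, float]:
    """Score each model by the fraction of examples it labels correctly."""
    scores: Dict[str, float] = {}
    for model in models:
        correct = sum(
            parse_label(generate_fn(model, build_sentiment_prompt(text))) == expected
            for text, expected in examples
        )
        scores[model] = correct / len(examples)
    return scores
```

To run it against real models, you could pass something like `lambda m, p: ollama.generate(model=m, prompt=p)["response"]` as `generate_fn`, together with the names of models you have pulled and a handful of labeled examples.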

Setting Up Ollama – A Step-by-Step Guide

Getting started with Ollama is incredibly straightforward. Here’s a breakdown of the installation process:

- Install Ollama: On Linux, open a terminal and run `curl -fsSL https://ollama.com/install.sh | sh`. This downloads and installs Ollama; make sure `curl` is installed first. On macOS and Windows, download the installer from https://ollama.com instead.

- Start the server: Run `ollama serve`. This starts the Ollama server, which manages the models and listens on `http://localhost:11434` by default. The desktop apps, and the background service set up by the Linux install script, usually start the server for you, in which case this step is unnecessary.

- Pull a model: Run `ollama pull llama2`. This downloads the Llama 2 model (or whichever model you specify) to your machine. You can browse the available models in the Ollama library: https://ollama.com/library.

Once the model is pulled, run it with `ollama run llama2`. This starts an interactive session in which you can chat with the model from the command line. This simplicity is a major advantage over many other LLM deployment methods.
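If you want to confirm from Python that the server is up and the model has been pulled, one option is to query the server's `/api/tags` endpoint, which lists locally available models. This is a minimal sketch using only the standard library; it assumes the default address `http://localhost:11434`, and `list_local_models` and `has_model` are illustrative helper names, not part of Ollama.

```python
import json
import urllib.request
from typing import List

# Default address of a local Ollama server (assumption: not reconfigured).
OLLAMA_URL = "http://localhost:11434"


def list_local_models(base_url: str = OLLAMA_URL) -> List[str]:
    """Return the names of locally pulled models via GET /api/tags."""
    with urllib.request.urlopen(f"{base_url}/api/tags", timeout=5) as resp:
        data = json.load(resp)
    return [model["name"] for model in data.get("models", [])]


def has_model(installed: List[str], name: str) -> bool:
    """Check a model name; a bare name such as 'llama2' means tag 'latest'."""
    wanted = name if ":" in name else f"{name}:latest"
    return wanted in installed


if __name__ == "__main__":
    try:
        models = list_local_models()
    except OSError as exc:  # URLError and timeouts are OSError subclasses
        print(f"Ollama server not reachable at {OLLAMA_URL}: {exc}")
    else:
        print("llama2 pulled:", has_model(models, "llama2"))
```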

Integrating Ollama with Python for Machine Learning

The official `ollama` Python library (install it with `pip install ollama`) makes it easy to integrate LLMs into your machine learning workflows. It talks to the local Ollama server, so you can use it for generating text, summarizing documents, answering questions, and more. Here's a basic example that generates text, assuming the server is running and `llama2` has been pulled:

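```python
# Requires: pip install ollama, a running Ollama server, and `ollama pull llama2`
import ollama

# Specify the model you want to use
model = "llama2"

# Generate text (model and prompt are passed as keyword arguments)
prompt = "Write a short story about a robot learning to love."
response = ollama.generate(model=model, prompt=prompt)

# The generated text is stored in the "response" field of the result
print(response["response"])
```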

The `ollama.generate()` function takes the model name and the prompt as keyword arguments and returns a response object; the generated text is in its `response` field. You can customize generation by passing an `options` dictionary, for example `options={"temperature": 0.7, "num_predict": 200}`, to control the randomness and length of the output. The Ollama documentation covers more advanced features such as streaming and multi-turn chat.

| Parameter | Description |
|---|---|
| `temperature` | Controls the randomness of the output. Higher values produce more creative, but potentially less coherent, text; lower values make the output more focused. Ollama's default is 0.8. |
| `num_predict` | The maximum number of tokens to generate in the response. |

The library is a thin client over the REST API that the Ollama server exposes (by default at `http://localhost:11434`), so you can also call a model directly over HTTP without any extra configuration.
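As a sketch of calling the REST API directly, the code below uses only the Python standard library to send a non-streaming request to `/api/generate`, passing `temperature` and `num_predict` (Ollama's option for the maximum number of generated tokens) in the `options` field. It assumes a server at the default address with `llama2` pulled; `build_generate_payload` and `generate` are illustrative helper names.

```python
import json
import urllib.request
from typing import Any, Dict, Optional

# Default address of a local Ollama server (assumption: not reconfigured).
OLLAMA_URL = "http://localhost:11434"


def build_generate_payload(
    model: str,
    prompt: str,
    temperature: float = 0.8,
    num_predict: Optional[int] = None,
) -> Dict[str, Any]:
    """Request body for POST /api/generate; stream=False asks for one JSON reply."""
    options: Dict[str, Any] = {"temperature": temperature}
    if num_predict is not None:
        options["num_predict"] = num_predict  # cap on generated tokens
    return {"model": model, "prompt": prompt, "stream": False, "options": options}


def generate(payload: Dict[str, Any], base_url: str = OLLAMA_URL) -> str:
    """POST the payload to a running Ollama server and return the generated text."""
    request = urllib.request.Request(
        f"{base_url}/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=120) as resp:
        return json.load(resp)["response"]


if __name__ == "__main__":
    body = build_generate_payload(
        "llama2", "Summarize what Ollama does in one sentence.",
        temperature=0.2, num_predict=60,
    )
    try:
        print(generate(body))
    except OSError as exc:  # raised when no server is listening
        print(f"Could not reach Ollama at {OLLAMA_URL}: {exc}")
```

Setting `stream` to `False` makes the server return a single JSON object instead of a sequence of partial responses, which keeps the parsing simple.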

Advanced Techniques and Model Selection

While the basics are simple, getting good results from Ollama takes some care. One important consideration is choosing the right model for your task. Different models are trained on different datasets and have different strengths. For example, `llama2` is a solid general-purpose model, while `mistral` (whose default tag is Mistral AI's instruction-tuned 7B model) is well suited to instruction-following tasks. You can browse the Ollama library to find the best model for your needs. Experimentation is key to finding the optimal model for your specific machine learning problem.
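Speed is one practical criterion when comparing models on your own hardware. A non-streaming generate reply includes `eval_count`, the number of generated tokens, and `eval_duration`, the generation time in nanoseconds, so throughput is `eval_count * 1e9 / eval_duration` tokens per second. The sketch below ranks models by that figure; the helper names and sample numbers are illustrative, not measurements, and output quality still has to be judged separately.

```python
from typing import Any, Dict, List, Tuple


def tokens_per_second(reply: Any) -> float:
    """Generation throughput from a non-streaming generate reply.

    eval_count is the number of generated tokens and eval_duration is the time
    spent generating them, in nanoseconds.
    """
    return reply["eval_count"] * 1e9 / reply["eval_duration"]


def rank_by_speed(replies: Dict[str, Any]) -> List[Tuple[str, float]]:
    """Return (model, tokens/s) pairs sorted from fastest to slowest."""
    speeds = [(name, tokens_per_second(reply)) for name, reply in replies.items()]
    return sorted(speeds, key=lambda pair: pair[1], reverse=True)


if __name__ == "__main__":
    # Illustrative numbers only.
    sample = {
        "llama2": {"eval_count": 100, "eval_duration": 2_000_000_000},
        "mistral": {"eval_count": 150, "eval_duration": 2_000_000_000},
    }
    for name, speed in rank_by_speed(sample):
        print(f"{name}: {speed:.1f} tokens/s")
    # prints "mistral: 75.0 tokens/s" then "llama2: 50.0 tokens/s"
```

With real models, you could collect one reply per model using the same prompt, for example `{name: ollama.generate(model=name, prompt=prompt) for name in ["llama2", "mistral"]}`, and pass that dictionary to `rank_by_speed`.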

Using Fine-Tuned Models with Ollama

Ollama does not train or fine-tune models itself, and there is no `ollama fine-tune` command. Instead, you fine-tune a model with a separate training framework (for example, Hugging Face Transformers with the PEFT library) and then import the result into Ollama. A Modelfile describes the custom model: `FROM` names the base model, `ADAPTER` points to a fine-tuned LoRA adapter, and `SYSTEM` and `PARAMETER` set a system prompt and default options. Running `ollama create <name> -f Modelfile` registers the customized model so you can use it like any other. Fine-tuning requires some machine learning experience and suitable hardware, but serving the result through Ollama lets you adapt open-weight models to your own data without dedicated serving infrastructure.
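Ollama imports fine-tuned adapters through a Modelfile rather than training them, and writing that file by hand is easy; generating it from Python can help when you manage several variants. The sketch below assembles a Modelfile from Ollama's `FROM`, `ADAPTER`, `PARAMETER`, and `SYSTEM` instructions. The adapter path `./sentiment-lora` and the model name `sentiment-llama2` are hypothetical, and the adapter must have been trained on the same base model named in `FROM`.

```python
from typing import Dict, Optional, Union


def build_modelfile(
    base_model: str,
    adapter_path: Optional[str] = None,
    system_prompt: Optional[str] = None,
    parameters: Optional[Dict[str, Union[int, float, str]]] = None,
) -> str:
    """Assemble Modelfile text from FROM, ADAPTER, PARAMETER, and SYSTEM lines."""
    lines = [f"FROM {base_model}"]
    if adapter_path:
        # The adapter must have been fine-tuned from the same base model.
        lines.append(f"ADAPTER {adapter_path}")
    for key, value in (parameters or {}).items():
        lines.append(f"PARAMETER {key} {value}")
    if system_prompt:
        # Triple quotes let the system prompt span multiple lines.
        lines.append(f'SYSTEM """{system_prompt}"""')
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(
        build_modelfile(
            "llama2",
            adapter_path="./sentiment-lora",  # hypothetical adapter directory
            system_prompt="Classify sentiment as positive, negative, or neutral.",
            parameters={"temperature": 0.2},
        )
    )
```

Save the printed text as `Modelfile`, then run `ollama create sentiment-llama2 -f Modelfile` followed by `ollama run sentiment-llama2`.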

Conclusion

Ollama represents a significant step forward in democratizing access to large language models. Its simplicity, cost-effectiveness, and broad model support make it a valuable tool for machine learning practitioners of all levels. From streamlining deployment to enabling local execution, Ollama lets developers use LLMs without the traditional hurdles. By understanding how to install, configure, and integrate Ollama with Python, you can speed up experimentation in your machine learning pipelines and reduce costs. Embrace Ollama for your next machine learning project! Explore the Ollama library and the GGUF models on the Hugging Face Hub for a broad model selection, and don't hesitate to experiment to discover the best LLM for your specific needs.

Image by: Google DeepMind