Mastering Ollama Models for High-Impact Content Creation

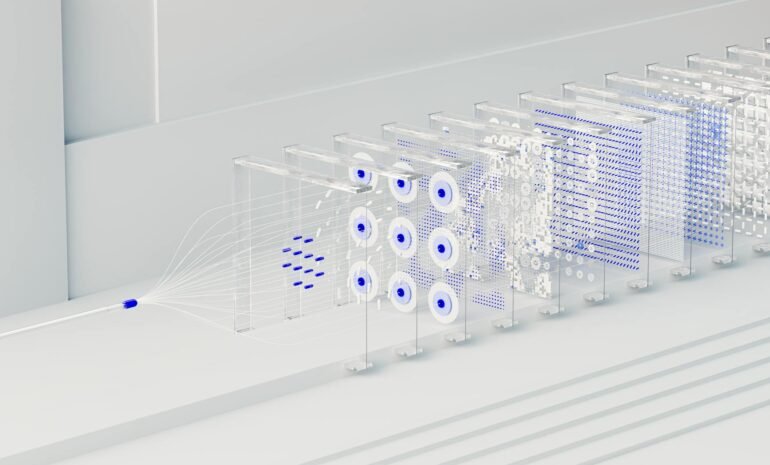

Are you ready to unlock the potential of powerful language models without the complexity of traditional APIs? In today’s rapidly evolving digital landscape, compelling content matters more than ever, yet accessing and using advanced AI models can feel daunting. This article shows you how to get the most out of Ollama, a user-friendly tool for running open language models on your own machine. We’ll cover everything from initial setup to using Ollama for a range of content creation tasks. You’ll learn how to install, manage, and use a large collection of openly available models to boost your productivity, without paying for cloud infrastructure. Prepare to elevate your content game!

Getting Started with Ollama: A Simple Setup

Ollama simplifies running language models on your local machine. Unlike cloud-based solutions, it offers a private and cost-effective way to experiment with and deploy AI models, and the initial setup requires minimal technical expertise. Running locally keeps your data on your own hardware and avoids network round trips to external APIs, although actual response times depend on your CPU, GPU, and the size of the model you choose.

Installation and Basic Usage

Installation is streamlined and adapts to different operating systems. Follow the instructions on the official Ollama website ([https://ollama.com/](https://ollama.com/)), which cover macOS, Linux, and Windows. Once Ollama is installed, you can pull models from the Ollama model library, which ranges from general-purpose models like Mistral to models fine-tuned for specific tasks.
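Once Ollama is running, it also serves a local REST API, which is handy for checking which models are already downloaded (the CLI equivalent is `ollama list`). The Python sketch below assumes the server is on its default address, `http://localhost:11434`, and uses the `/api/tags` endpoint; the helper names `list_local_models` and `parse_model_names` are illustrative, not part of any Ollama library.

```python
import json
import urllib.request
from typing import List

# Assumption: the Ollama server is listening on its default local address.
OLLAMA_URL = "http://localhost:11434"


def parse_model_names(payload: dict) -> List[str]:
    """Extract model names from a /api/tags response body."""
    return [entry["name"] for entry in payload.get("models", [])]


def list_local_models(base_url: str = OLLAMA_URL) -> List[str]:
    """Ask the local Ollama server which models have already been pulled."""
    with urllib.request.urlopen(f"{base_url}/api/tags", timeout=10) as resp:
        return parse_model_names(json.load(resp))
```

With the server running and a model pulled, `list_local_models()` returns tagged names such as `mistral:latest`.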

Installing a Model

To install a model, use the command-line interface (CLI). For example, to install the `mistral` model, run `ollama pull mistral`. This downloads the model weights and prepares them for use, showing a progress bar while the download runs. When it completes, you can start interacting with the model, for example with `ollama run mistral`.
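If you want the same models on several machines, the pull step is easy to script. A minimal sketch, assuming the `ollama` binary is on your `PATH`; `pull_command` and `pull_models` are hypothetical helper names that simply shell out to `ollama pull`.

```python
import shutil
import subprocess
from typing import List


def pull_command(model: str) -> List[str]:
    """Build the argument list for `ollama pull <model>`."""
    if not model or any(ch.isspace() for ch in model):
        raise ValueError(f"invalid model name: {model!r}")
    return ["ollama", "pull", model]


def pull_models(models: List[str]) -> None:
    """Pull each model in turn; raises if the CLI is missing or a pull fails."""
    if shutil.which("ollama") is None:
        raise FileNotFoundError("the `ollama` CLI was not found on PATH")
    for model in models:
        # check=True turns a non-zero exit code into CalledProcessError.
        subprocess.run(pull_command(model), check=True)
```

For example, `pull_models(["mistral"])` has the same effect as typing `ollama pull mistral`, and the CLI's own progress output still appears in the terminal.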

Leveraging Ollama for Content Generation: Text, Code, and More

Ollama’s power lies in its versatility. You can use it for a wide range of content creation tasks, including writing blog posts, generating marketing copy, assisting with code, and brainstorming ideas. The simple CLI makes it easy to try out prompts and iterate on them.
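For quick experiments you can pass a prompt directly to `ollama run`, which prints the reply and exits instead of opening an interactive session. A sketch of wrapping that from Python, assuming `ollama` is on `PATH` and the model has already been pulled; `run_command` and `ask` are illustrative names.

```python
import subprocess
from typing import List


def run_command(model: str, prompt: str) -> List[str]:
    """Arguments for a one-shot `ollama run <model> "<prompt>"` invocation."""
    return ["ollama", "run", model, prompt]


def ask(model: str, prompt: str) -> str:
    """Run a single prompt through the CLI and return the printed reply."""
    result = subprocess.run(
        run_command(model, prompt),
        capture_output=True,  # collect stdout instead of printing it
        text=True,            # decode bytes to str
        check=True,           # raise if the CLI exits with an error
    )
    return result.stdout.strip()
```

Passing the prompt as a separate list element avoids shell-quoting problems. For longer scripts, the local REST API returns structured JSON, which is easier to parse than terminal output.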

Creative Writing and Storytelling

General-purpose models available through Ollama handle creative writing well. You provide a prompt (a starting sentence, a few keywords, or a character description) and the model generates coherent, imaginative text. Experiment with different prompts and sampling parameters (temperature, top_p) to adjust the style and tone of the output. For instance, you could ask it to “Write a short story about a robot discovering the meaning of friendship.” The quality of the output depends on the model you choose and the clarity of your prompt.
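The temperature and top_p settings can be set per request through Ollama’s local REST API. A minimal sketch, assuming the default server address and the `/api/generate` endpoint with streaming disabled; the helper names and the specific parameter values are illustrative, not recommended defaults.

```python
import json
import urllib.request

# Assumption: the Ollama server is listening on its default local address.
OLLAMA_URL = "http://localhost:11434"


def build_generate_payload(model: str, prompt: str,
                           temperature: float = 0.8, top_p: float = 0.9) -> dict:
    """Request body for /api/generate with explicit sampling options."""
    if temperature < 0:
        raise ValueError("temperature must be non-negative")
    if not 0.0 <= top_p <= 1.0:
        raise ValueError("top_p must be between 0 and 1")
    return {
        "model": model,
        "prompt": prompt,
        "stream": False,  # return one JSON object instead of a token stream
        "options": {"temperature": temperature, "top_p": top_p},
    }


def generate(payload: dict, base_url: str = OLLAMA_URL) -> str:
    """POST the payload and return the generated text."""
    req = urllib.request.Request(
        f"{base_url}/api/generate",
        data=json.dumps(payload).encode("utf-8"),  # data makes this a POST
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req, timeout=300) as resp:
        return json.load(resp)["response"]
```

For example, `generate(build_generate_payload("mistral", "Write a short story about a robot discovering the meaning of friendship.", temperature=1.0))` trades some predictability for more varied prose.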

Code Generation and Assistance

Many models are fine-tuned specifically for code generation, which makes Ollama a valuable tool for developers. You can ask a model to write functions, complete code snippets, or help debug existing code, in popular languages such as Python, JavaScript, and C++. This can speed up routine coding and help you get past roadblocks. For example, you could ask it to “Write a Python function to calculate the factorial of a number.”
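Code-focused replies usually wrap the code in Markdown fences surrounded by explanation, so a small helper that pulls out just the code is useful when saving output to files or running it through tests. A sketch under the assumption that the model uses standard triple-backtick fences with an optional language tag; `extract_code_blocks` is a hypothetical helper, and output formatting varies by model.

```python
import re
from typing import List, Optional

# Build the fence marker programmatically so this snippet can itself sit
# inside a Markdown code block without closing it.
FENCE = "`" * 3
# Opening fence, optional language tag, newline, then the body (non-greedy)
# up to the next fence.
CODE_BLOCK = re.compile(FENCE + r"[ \t]*([\w+-]*)[ \t]*\n(.*?)" + FENCE, re.DOTALL)


def extract_code_blocks(reply: str, language: Optional[str] = None) -> List[str]:
    """Return the bodies of fenced code blocks in a model reply.

    If `language` is given, keep only blocks tagged with that language.
    """
    blocks = []
    for tag, body in CODE_BLOCK.findall(reply):
        if language is None or tag.lower() == language.lower():
            blocks.append(body.rstrip("\n"))
    return blocks
```

Filtering by language tag helps when a reply mixes, say, a Python function with a shell command showing how to run it.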

Example: Generating Marketing Copy

Suppose you need catchy headlines for a new product launch. Give the model a brief description of the product (e.g., “A revolutionary noise-canceling headphone”) and ask it for ten different headlines. It will return a list of candidates, each aiming to grab attention and communicate the product’s value proposition.
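Asking for a numbered list makes the headline output easy to post-process. The sketch builds the prompt and parses common list styles (`1.`, `2)`, `-`) out of the reply; the prompt wording and the helper names are assumptions, and the reply text can come from whichever Ollama call you prefer.

```python
import re
from typing import List

# A list item: optional indent, then "1." / "1)" / "-" / "*", then the text.
LIST_ITEM = re.compile(r"^\s*(?:\d+[.)]|[-*])\s+(.*\S)\s*$")


def headline_prompt(product: str, count: int = 10) -> str:
    """Prompt asking for `count` headlines, one per numbered line."""
    return (
        f"Product: {product}\n"
        f"Write {count} different catchy headlines for this product's launch. "
        "Return only a numbered list, one headline per line, no commentary."
    )


def parse_headlines(reply: str) -> List[str]:
    """Pull list items out of the reply, dropping numbering and surrounding quotes."""
    headlines = []
    for line in reply.splitlines():
        match = LIST_ITEM.match(line)
        if match:
            headlines.append(match.group(1).strip('"'))
    return headlines
```

Lines that are not list items, such as a chatty "Here are some options:" preamble, are simply skipped.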

Optimizing Prompts for Best Results with Ollama

The quality of your prompts directly impacts the output you receive from Ollama models. Effective prompt engineering is crucial for unlocking the full potential of these powerful tools. Here are some tips for crafting effective prompts:

- Be Specific: The more details you provide, the better the model can understand your intent.

- Define the Output Format: Specify the desired length, tone, and style of the output.

- Use Keywords: Include relevant keywords to guide the model’s thinking.

- Provide Context: Give the model sufficient background information to understand the task.

- Experiment with Temperature and top_p: These sampling parameters control the randomness of the output. Lower values produce more predictable, focused results, while higher values produce more varied, creative results. You can set them in a Modelfile or per request through Ollama’s API; see the Ollama documentation for the available parameters.

By following these guidelines, you can significantly improve the quality and relevance of the content generated by Ollama.
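One way to apply these tips consistently is to assemble prompts from named fields instead of free text. `PromptSpec` is a hypothetical helper, not part of Ollama; temperature and top_p are left out because they are sampling options sent alongside the prompt, not part of the prompt text.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class PromptSpec:
    """Hypothetical container mirroring the prompt-writing tips."""
    task: str                                           # be specific
    context: str = ""                                   # provide context
    keywords: List[str] = field(default_factory=list)   # use keywords
    length: str = ""                                    # output format: length
    tone: str = ""                                      # output format: tone
    output_format: str = ""                             # e.g. "bullet list"

    def render(self) -> str:
        """Join the non-empty fields into a labeled, line-per-field prompt."""
        parts = [f"Task: {self.task}"]
        if self.context:
            parts.append(f"Context: {self.context}")
        if self.keywords:
            parts.append("Keywords to include: " + ", ".join(self.keywords))
        constraints = [c for c in (self.length, self.tone, self.output_format) if c]
        if constraints:
            parts.append("Requirements: " + "; ".join(constraints))
        return "\n".join(parts)
```

Keeping each requirement on its own labeled line makes it easy to see which tip a prompt is missing when the output disappoints.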

Integrating Ollama into Your Workflow: Productivity Boost

Ollama isn’t just a tool for generating content; it’s a productivity enhancer. Once you’ve mastered the basics, you can integrate it into your existing workflow as a quick brainstorming partner, a code assistant, or a creative writing aid. Because the models run locally, you avoid per-request fees from hosted APIs, and sensitive data never has to leave your machine.
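For the brainstorming-partner use case, conversation history matters: each follow-up should see the earlier turns. A sketch against Ollama’s `/api/chat` endpoint, assuming the default local address and non-streaming responses; `BrainstormSession` and its system prompt are illustrative.

```python
import json
import urllib.request

# Assumption: the Ollama server is listening on its default local address.
OLLAMA_URL = "http://localhost:11434"


class BrainstormSession:
    """Keeps a running message history so follow-up prompts have context."""

    def __init__(self, model: str,
                 system: str = "You are a concise brainstorming partner."):
        self.model = model
        self.messages = [{"role": "system", "content": system}]

    def payload(self, user_text: str) -> dict:
        """Request body for /api/chat: full history plus the new user turn."""
        return {
            "model": self.model,
            "messages": self.messages + [{"role": "user", "content": user_text}],
            "stream": False,
        }

    def record(self, user_text: str, reply: str) -> None:
        """Append a completed exchange to the history."""
        self.messages.append({"role": "user", "content": user_text})
        self.messages.append({"role": "assistant", "content": reply})

    def ask(self, user_text: str, base_url: str = OLLAMA_URL) -> str:
        """Send the turn, store the exchange, and return the model's reply."""
        req = urllib.request.Request(
            f"{base_url}/api/chat",
            data=json.dumps(self.payload(user_text)).encode("utf-8"),
            headers={"Content-Type": "application/json"},
        )
        with urllib.request.urlopen(req, timeout=300) as resp:
            reply = json.load(resp)["message"]["content"]
        self.record(user_text, reply)
        return reply
```

A typical session might call `ask("Give me three blog topics about local AI.")` and then `ask("Expand on the second one.")`, with the second request carrying the first exchange as context.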

Automating Content Creation

You can use scripts and automation tools to build Ollama into your content creation pipeline. For example, a script could draft social media posts from a set of keywords, or produce code snippets for recurring tasks. This automation frees you to focus on higher-level work, such as editing and refining the generated content.
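A keyword-driven social media script can be as small as a loop over keywords. In this sketch the model call is passed in as a plain function so the loop can be tested without a server; `draft_posts`, the prompt template, and the 280-character limit are assumptions to adapt to your platform.

```python
from typing import Callable, Dict, List

# Hypothetical prompt template; adjust wording, length, and platform to taste.
POST_TEMPLATE = (
    "Write one social media post (max {max_chars} characters) about {keyword}. "
    "Return only the post text."
)


def draft_posts(keywords: List[str],
                generate: Callable[[str], str],
                max_chars: int = 280) -> Dict[str, str]:
    """Draft one post per keyword.

    `generate` is any function mapping a prompt to model output, for example
    a thin wrapper around a local Ollama call. Over-long drafts are flagged
    for human review rather than silently truncated.
    """
    drafts = {}
    for keyword in keywords:
        prompt = POST_TEMPLATE.format(max_chars=max_chars, keyword=keyword)
        text = generate(prompt).strip()
        if len(text) > max_chars:
            text = "[NEEDS EDIT] " + text
        drafts[keyword] = text
    return drafts
```

In practice you would pass a function that sends the prompt to a local model, for example one that posts to `/api/generate` and returns the `response` field; the flagged drafts then become the editing queue.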

Customizing Models with Fine-tuning (Advanced)

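For advanced users, Ollama lets you tailor a model’s behavior to your specific needs. Ollama does not train models itself, but a Modelfile lets you set a system prompt and default parameters on top of a base model, and it can import adapter weights (such as LoRA adapters) that you have fine-tuned on your own data with other tools. Fine-tuning requires more technical expertise but can significantly improve the quality and relevance of the output for specialized tasks. The Ollama documentation describes the Modelfile format and how to import adapters.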
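To customize a model, the file Ollama itself reads is a Modelfile: a base model (`FROM`), optional adapter weights (`ADAPTER`), default `PARAMETER` values, and a `SYSTEM` prompt. A sketch that writes one and registers it with `ollama create`, assuming the CLI is on `PATH`; the helper names are hypothetical, and an adapter only works with the base model it was trained against.

```python
import subprocess
from pathlib import Path
from typing import Dict, Optional


def render_modelfile(base: str, system: str,
                     params: Optional[Dict[str, object]] = None,
                     adapter: Optional[str] = None) -> str:
    """Compose Modelfile text: base model, optional adapter, parameters, system prompt."""
    lines = [f"FROM {base}"]
    if adapter:
        # ADAPTER points at fine-tuned weights produced by an external training tool.
        lines.append(f"ADAPTER {adapter}")
    for name, value in (params or {}).items():
        lines.append(f"PARAMETER {name} {value}")
    lines.append(f'SYSTEM """{system}"""')
    return "\n".join(lines) + "\n"


def create_model(name: str, modelfile_text: str, path: str = "Modelfile") -> None:
    """Write the Modelfile and register it via `ollama create <name> -f <path>`."""
    Path(path).write_text(modelfile_text, encoding="utf-8")
    subprocess.run(["ollama", "create", name, "-f", path], check=True)
```

For example, `create_model("copywriter", render_modelfile("mistral", "You write concise, upbeat product copy.", {"temperature": 0.7}))` registers a model you can then start with `ollama run copywriter`.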

| Feature | Description |
|---|---|
| Model Variety | Ollama offers a wide array of models, from general-purpose models to models specialized for specific tasks. |
| Local Execution | Models run on your own hardware, keeping data private and avoiding network round trips. |
| Ease of Use | A simple command-line interface handles model installation and usage. |
| Integration | Can be integrated into existing workflows for automation and productivity. |

Conclusion: Unlock the Potential of Local AI with Ollama

Ollama represents a significant step forward in democratizing access to powerful language models. It’s a simple, efficient, and cost-effective way to run advanced AI on your own hardware. By mastering Ollama, you can dramatically enhance your content creation capabilities, accelerate your development workflow, and unlock new levels of productivity – all while maintaining data privacy. The benefits extend far beyond simple text generation; with the right prompts and integration, Ollama can become an indispensable tool for any content creator, developer, or anyone seeking to leverage the power of AI. Embrace the local AI revolution and start exploring the possibilities today.

Image by: General Kenobi